Anyone who has played enough CS2 knows why this matters.

The problem is not only blatant spinbotters. Those are easy. The real damage comes from the gray area: the player who always seems to know the stack, the crosshair that arrives too cleanly too often, the “smart” peek that stops looking smart when you watch the demo back a second time. That is where trust in matchmaking starts to break down.

Valve Anti-Cheat is still part of the picture, and we surface official Steam ban information because it matters. But the broader frustration in the Counter-Strike community has been building for a long time. Players do not just want to know whether someone was banned eventually. They want to feel that suspicious behavior is being taken seriously while the match, the season, and the grind still matter.

At the same time, anti-cheat is not as simple as “ban faster.” Counter-Strike has also seen the other side of the problem: false positives and overcorrections. That is exactly why we did not want to build a system that looks at one strange clip and jumps straight to a verdict. If you care about competitive integrity, you also have to care about being right.

That is the thinking behind our integrity review system.

We built it because VAC alone does not answer the question our players are asking. The question is not only, “Was this person banned by Valve?” The question is, “What does their actual play pattern look like over time, and does it deserve closer review?”

So our first step was not punishment. It was evidence.

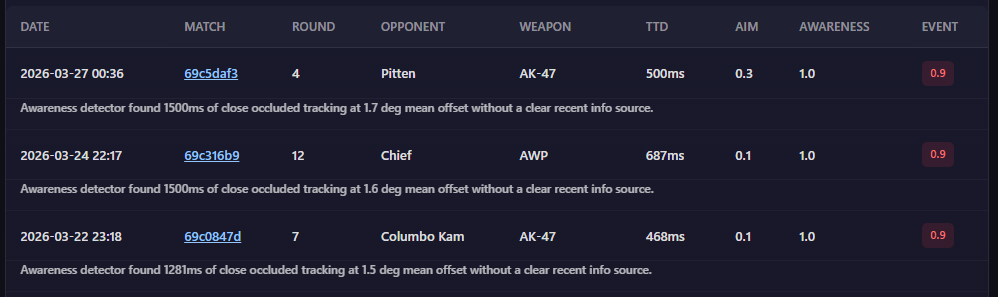

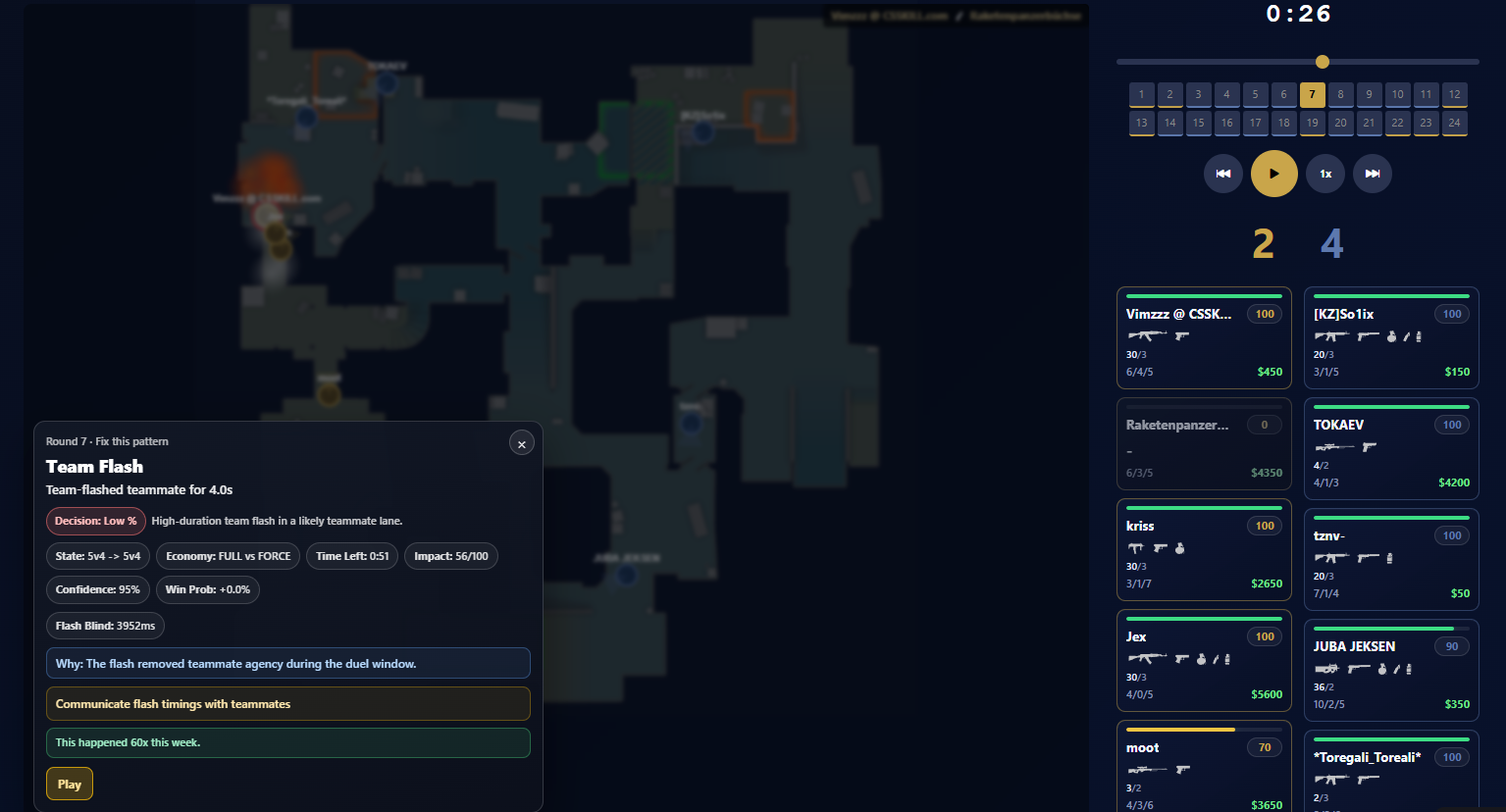

Today, our system is focused on data gathering for manual review. When we parse CS2 demos, we do more than collect scoreboard stats. We generate integrity evidence around real engagements: how quickly a player reacts after visibility begins, whether there was suspicious tracking before an enemy was visible, how large the first correction was, how quickly damage followed visual confirmation, what the crosshair placement looked like, how many enemies were visible, whether utility was used before contact, and whether there were plausible information sources that could explain the decision.
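To make that list concrete, here is a minimal sketch of what one engagement record could look like. It is not the production schema: every field name, unit, and the `has_plausible_information` helper are assumptions chosen to mirror the signals described above.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class EngagementEvidence:
    """One engagement pulled from a parsed demo (hypothetical field names)."""

    player_id: str
    round_number: int
    reaction_ms: Optional[float]        # visibility start -> first reaction; None if unknown
    pre_visibility_tracking: bool       # crosshair followed the enemy before line of sight
    first_correction_deg: float         # size of the first aim correction
    time_to_damage_ms: Optional[float]  # visual confirmation -> first damage; None if no damage
    crosshair_offset_deg: float         # crosshair placement error when visibility began
    visible_enemies: int
    utility_used_before_contact: bool
    info_sources: Tuple[str, ...] = ()  # assumed labels, e.g. "footsteps", "gunfire"

    def has_plausible_information(self) -> bool:
        # True when at least one legitimate information source was recorded.
        return len(self.info_sources) > 0
```

Keeping the record immutable and flat is one possible design choice: a reviewer can read a single event without needing the rest of the match for context.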

From there, we separate suspicion into two broad buckets.

The first is aim-related suspicion: snaps, corrections, and engagement patterns that deserve a closer look.

The second is awareness-related suspicion: moments where a player appears to track or pre-empt information they should not realistically have.

That distinction matters. A lot of anti-cheat conversation in the community collapses everything into “cheating” as if it were one behavior. It is not. Someone can look mechanically normal and still make deeply suspicious information plays. Someone else can have cracked aim but perfectly legitimate decision-making. If you want a system that reviewers can actually trust, you need to be more precise than a single score with no explanation.
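One way to picture the two buckets is a flagging function that returns a labeled reason per bucket instead of a single number. The sketch below is illustrative only: the thresholds, the `Bucket` and `Flag` types, and the rules themselves are assumptions, not the rules used in the real system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Bucket(Enum):
    AIM = "aim"
    AWARENESS = "awareness"


@dataclass(frozen=True)
class Flag:
    bucket: Bucket
    reason: str


# Hypothetical thresholds, for illustration only.
FAST_REACTION_MS = 120.0
LARGE_CORRECTION_DEG = 30.0


def flag_engagement(
    reaction_ms: float,
    first_correction_deg: float,
    tracked_before_visible: bool,
    has_info_source: bool,
) -> List[Flag]:
    """Return zero or more flags, each tied to a bucket and a readable reason."""
    flags: List[Flag] = []
    # Aim bucket: a very fast reaction combined with a very large first correction.
    if reaction_ms < FAST_REACTION_MS and first_correction_deg > LARGE_CORRECTION_DEG:
        flags.append(Flag(
            Bucket.AIM,
            f"{first_correction_deg:.0f} deg correction within {reaction_ms:.0f} ms",
        ))
    # Awareness bucket: tracking an unseen enemy with no recorded explanation.
    if tracked_before_visible and not has_info_source:
        flags.append(Flag(
            Bucket.AWARENESS,
            "tracked enemy before visibility with no recorded information source",
        ))
    return flags
```

A player with a 90 ms reaction and a 45 degree correction would land only in the aim bucket here, while someone tracking through a wall with ordinary mechanics would land only in the awareness bucket.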

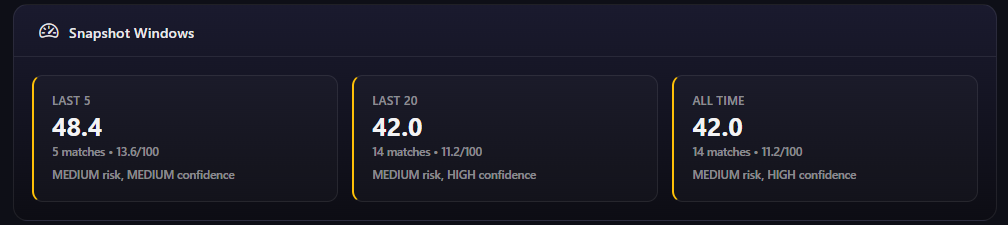

That is why our integrity layer does not stop at isolated moments. It builds summaries per match, then rolls those summaries into longer windows like recent matches and larger historical samples. The reason is simple: one insane round proves almost nothing in Counter-Strike. Repeated anomalies across matches are a very different story.
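The roll-up from per-match summaries into longer windows can be sketched as follows. The `MatchSummary` shape, the window sizes, and the output keys are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, Sequence, Tuple


@dataclass(frozen=True)
class MatchSummary:
    """Per-match roll-up of raw events (hypothetical shape)."""

    match_id: str
    engagements: int  # engagements analysed in this match
    flagged: int      # engagements that raised at least one flag


def rolling_snapshot(
    history: Sequence[MatchSummary],
    windows: Tuple[int, ...] = (5, 20),
) -> Dict[int, Dict[str, float]]:
    """Aggregate the most recent N matches for each window size.

    `history` must be ordered oldest first; window sizes must be positive.
    """
    snapshot: Dict[int, Dict[str, float]] = {}
    for size in windows:
        recent = history[-size:]
        total = sum(m.engagements for m in recent)
        flagged = sum(m.flagged for m in recent)
        snapshot[size] = {
            "matches": len(recent),
            "engagements": total,
            "flagged_rate": flagged / total if total else 0.0,
            # How many matches contributed flags: separates isolated from persistent.
            "matches_with_flags": sum(1 for m in recent if m.flagged > 0),
        }
    return snapshot
```

Reporting `matches_with_flags` next to the rate is the point of the exercise: one wild match can push a short window's rate up, but it still counts as a single match.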

This is also why human review remains central to the system.

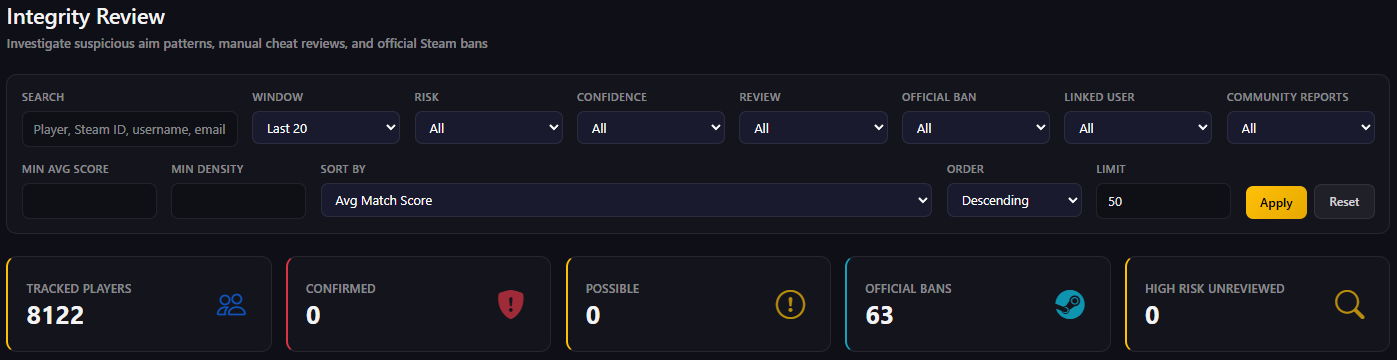

Our reviewers are not looking for one miracle clip. They are looking for patterns. Is the suspicious behavior recurring? Is the sample size meaningful? Is the confidence high enough? Do the raw events support the aggregate score? Is there official Steam ban data that adds context? That is the level we want to operate on, because competitive integrity is too important to reduce to gut feeling.
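Those reviewer questions could also be turned into a small checklist that runs before a case reaches a human. The sketch below does not make a decision; it only lists which questions are still open. The function name, parameters, and default thresholds are assumptions for the example.

```python
from typing import List, Tuple


def review_checklist(
    matches_with_flags: int,
    total_engagements: int,
    confidence: float,
    raw_event_count: int,
    aggregate_flag_count: int,
    min_matches: int = 3,          # hypothetical default
    min_engagements: int = 100,    # hypothetical default
    min_confidence: float = 0.8,   # hypothetical default
) -> Tuple[bool, List[str]]:
    """Return (ready_for_review, open_questions) for one player snapshot."""
    open_questions: List[str] = []
    if matches_with_flags < min_matches:
        open_questions.append(
            f"not recurring: flags in {matches_with_flags} match(es), want {min_matches}"
        )
    if total_engagements < min_engagements:
        open_questions.append(
            f"small sample: {total_engagements} engagements, want {min_engagements}"
        )
    if confidence < min_confidence:
        open_questions.append(
            f"low confidence: {confidence:.2f}, want {min_confidence:.2f}"
        )
    if raw_event_count != aggregate_flag_count:
        open_questions.append("raw events do not match the aggregate count")
    return (len(open_questions) == 0, open_questions)
```

Returning the list of open questions rather than a bare boolean keeps the output explainable: a reviewer sees why a case is or is not ready.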

In practical terms, our system currently works as a layered review pipeline.

- First, parsed demos produce raw integrity events.

- Then those events are turned into per-match summaries.

- Then those summaries are aggregated into rolling player snapshots so we can see whether suspicious behavior is isolated or persistent.

- Then our operators can review those cases with the actual evidence in front of them.

And alongside all of that, we sync official Steam/VAC ban data so we can display the external signal too, instead of pretending our system exists in a vacuum.
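The post does not say how the ban sync is implemented. One plausible source is the Steam Web API's `ISteamUser/GetPlayerBans` endpoint, which returns a `players` array with fields such as `VACBanned` and `NumberOfVACBans`. The sketch below only parses a payload of that shape; the `BanStatus` type is hypothetical, the SteamID in the usage example is made up, and no network call is made.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BanStatus:
    """External ban signal shown alongside internal evidence (hypothetical type)."""

    steam_id: str
    vac_banned: bool
    vac_bans: int
    game_bans: int
    days_since_last_ban: int


def parse_player_bans(payload: str) -> List[BanStatus]:
    """Parse a GetPlayerBans-style JSON response into BanStatus records."""
    data = json.loads(payload)
    return [
        BanStatus(
            steam_id=str(p["SteamId"]),
            vac_banned=bool(p.get("VACBanned", False)),
            vac_bans=int(p.get("NumberOfVACBans", 0)),
            game_bans=int(p.get("NumberOfGameBans", 0)),
            days_since_last_ban=int(p.get("DaysSinceLastBan", 0)),
        )
        for p in data.get("players", [])
    ]


# Usage with a made-up payload:
sample = json.dumps({"players": [{
    "SteamId": "76561190000000000", "CommunityBanned": False,
    "VACBanned": True, "NumberOfVACBans": 1, "DaysSinceLastBan": 42,
    "NumberOfGameBans": 0, "EconomyBan": "none",
}]})
statuses = parse_player_bans(sample)
```

Storing the ban record as its own type, separate from internal flags, keeps the external signal visible as context without letting it stand in for evidence from actual play.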

That structure is intentional. It gives us something the broader anti-cheat conversation often lacks: explainability.

If we are going to label a player as suspicious, there has to be a reason. If we are going to escalate a review, there has to be a pattern. And if we are going to improve detection over time, we need structured evidence we can learn from, not just forum anger and post-match guesswork.

That last point is where this is going.

Right now, the system is primarily about collecting good data and supporting manual review. That is the correct phase for it. But the long-term goal is to use that evidence to train and refine better learning systems that can flag suspicious behavior more accurately, faster, and with more context than a simple binary anti-cheat outcome.

There is active research moving in this direction across FPS anti-cheat: server-side analysis, explainable detection, behavior-based models, and systems designed to help reviewers understand why something is suspicious instead of just outputting a hidden score. That is the lane we believe in.

Because the truth is, players are tired of being asked to choose between two bad options: trust a system they do not believe in, or accuse everyone who outplays them.

We think there is a better middle ground.

VAC still matters. Official bans still matter. But trust in a competitive game is earned through transparency, evidence, and consistency. That is why we built our integrity review system. Not to claim we have solved cheating overnight, and not to replace human judgment, but to give ourselves and our players a stronger way to see suspicious behavior clearly, review it responsibly, and keep improving from real match data instead of wishful thinking.

Counter-Strike deserves that. So do the players still grinding through it.